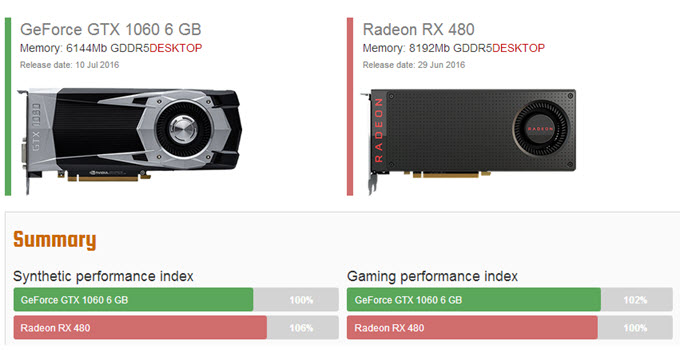

While in years past GPUs were used for mining cryptocurrencies such as Bitcoin or Ethereum, they are generally no longer used for this at scale, having given way to specialized hardware: first Field-Programmable Gate Arrays (FPGAs) and then Application-Specific Integrated Circuits (ASICs).

Beyond video rendering, GPUs excel in machine learning, financial simulations and risk modeling, and many other types of scientific computation. GPUs are best suited for repetitive, highly parallel computing tasks. Designed with thousands of processor cores running simultaneously, GPUs enable massive parallelism where each core is focused on making efficient calculations. While individual CPU cores are faster (as measured by clock speed) and smarter (as measured by available instruction sets) than individual GPU cores, the sheer number of GPU cores and the massive parallelism they offer more than make up for the single-core clock speed difference and the limited instruction sets. In a server environment, there might be 24 to 48 very fast CPU cores. Adding 4 to 8 GPUs to this same server can provide as many as 40,000 additional cores. GPUs are not as versatile as CPUs, however: CPUs have large and broad instruction sets and manage every input and output of a computer, which a GPU cannot do. On workloads that parallelize well, though, GPUs can process data orders of magnitude faster than a CPU.
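To make the core-count argument concrete, here is a rough back-of-the-envelope sketch in Python. The core counts (48 CPU cores, 40,000 GPU cores) come from the figures above; the clock speeds (3.5 GHz and 1.5 GHz) and the "one simple operation per core per cycle" model are illustrative assumptions, not measurements of any real hardware.

```python
def peak_ops_per_second(cores: int, clock_ghz: float) -> float:
    """Idealized upper bound: one simple operation per core per clock cycle."""
    return cores * clock_ghz * 1e9


# Core counts are taken from the text; clock speeds are assumed for illustration.
cpu_peak = peak_ops_per_second(cores=48, clock_ghz=3.5)
gpu_peak = peak_ops_per_second(cores=40_000, clock_ghz=1.5)

# How many times more simple operations the GPU cores could issue in this model.
ratio = gpu_peak / cpu_peak
print(f"CPU: {cpu_peak:.2e} ops/s, GPU: {gpu_peak:.2e} ops/s, ratio: {ratio:.0f}x")
```

Even with each GPU core running at less than half the assumed CPU clock speed, the far larger core count dominates the total. This simple model ignores memory bandwidth, vector units on the CPU, and how well a given task actually parallelizes, so treat the ratio as a rough intuition rather than a benchmark.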

A GPU is designed to quickly render high-resolution images and video concurrently. Because GPUs can perform parallel operations on multiple sets of data, they are also commonly used for non-graphical tasks such as machine learning and scientific computation. The main difference between CPU and GPU architecture is that a CPU is designed to handle a wide range of tasks quickly (as measured by CPU clock speed) but is limited in the number of tasks that can run concurrently, while a GPU is designed to run many simpler calculations at the same time.
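The kind of work that suits a GPU can be sketched as the same small calculation applied independently to every element of a data set. The Python example below is only a structural illustration: the function names are hypothetical, and a thread pool stands in for GPU cores. In standard CPython, threads do not actually speed up this kind of arithmetic, so the sketch shows the shape of a data-parallel task rather than real GPU performance.

```python
from concurrent.futures import ThreadPoolExecutor


def scale_and_offset(x: float) -> float:
    """The same small calculation, applied to each element independently."""
    return 2.0 * x + 1.0


def parallel_map(values, workers=4):
    """Hand each element to a pool of workers; results keep the input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(scale_and_offset, values))


data = [float(i) for i in range(8)]
result = parallel_map(data)
print(result)
```

Because no element depends on any other, the work can be split across as many workers as are available, which is the property that lets thousands of GPU cores stay busy at once.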
